Shaun S. Wang

Dr. Wang received bachelor’s and master’s degrees in mathematics from Peking University and a doctorate in statistics from the University of Waterloo in Canada.

In 1997, he was promoted to associate professor at the University of Waterloo and obtained tenure. In the same year, he joined SCOR Reinsurance in the United States and served as research director.

In 2005, he founded Risk Lighthouse LLC. He joined Georgia State University's Robinson School of Business as an Associate Professor in 2004 and was promoted to Thomas P. Bowles Chair Professor in 2008.

In 2013, he joined the Geneva Association of Switzerland as Deputy Secretary-General.

In 2015, he joined Nanyang Technological University in Singapore as director of the "Insurance and Finance Research Center" and professor in the Department of Banking and Finance.

In 2019, he joined the Department of Finance of Southern University of Science and Technology as chair professor.

Andreas Bollmann

Andreas Bollmann has 24 years of experience in international reinsurance, with a strong focus on developing countries. In his current position as founding partner of Faber Consulting, he advises re-/insurers, development banks, governments and industry clients on regional markets, re-/insurance regulation and supervision, and provides in-depth non-life reinsurance underwriting expertise. With his broad intercultural experience, he offers a robust network in the international reinsurance industry as well as with regulators and other public sector entities, providing financial analysis alongside market research and competitor analysis. Andreas has more than 15 years of experience in development consultancy in the field of risk financing and risk transfer mechanisms, including technical design (underwriting, risk definition, trigger selection, legal and financial structuring, re-/insurance arrangements) and business planning.

He has been involved in developing several large-scale parametric climate risk insurance solutions for developing countries, among others for KfW Development Bank and the InsuResilience Solutions Fund. Andreas started his reinsurance career as Account Executive for Japan, first based in Munich and later in Zurich and Hong Kong. In 2004, he was appointed Head of Swiss Re’s Korea branch in Seoul before moving to Singapore as Head of the Public Sector Business Development Team Asia/Australia. In 2013, he joined Faber Consulting AG from Saudi Re, a Riyadh-based reinsurer, where he served as Chief Underwriting Officer.

He is a German national who holds an MSc in East Asian Studies from Duisburg University, with majors in economics, political science and Japanese.

Esther Ying Yang

Esther Yang, co-founder of Risk Lighthouse LLC, is an active participant in and observer of global financial markets. In more than 20 years of market experience, she has worked in derivatives trade execution, research, and investment strategy design. Over the years, Esther has worked across many parts of the derivatives market, including interest rates (US and foreign), FX, equities, and commodities, both exchange-traded and OTC. She has also helped clients design financial products, drawing on her market and quantitative knowledge of embedded options. With her expertise and insights, many successful products have been launched with reasonable profit and proper risk management.

In recent years, Ms. Yang has started working with founders and investors on investing in early-stage technology startups. She has also become more active in finance research. Her recent paper, co-authored during the global pandemic and titled “Government Support for SMEs in Response to COVID-19: Theoretical Model Using Wang Transform”, won the 2021 China Finance Review International Research Excellence Award. Ms. Yang is also the sole author of “Esther’s newsletter”, a macroeconomic and finance commentary with an investment focus. Ms. Yang holds a bachelor’s degree in biology from Beijing University and a master’s degree in actuarial science from the University of Waterloo. She is a Fellow of the Society of Actuaries and a CFA charterholder.

Jing Rong Goh

Dr. Goh is an expert in actuarial science, data science, blockchain, trust technology, climate risks, financial mathematics, statistics, and econometrics.

Dr. Goh previously co-founded a fintech startup that was acquired by an insurance broker and is now actively investing in and advising start-ups.

Dr. Goh was also the Lead Data Actuary and Research Fellow for the Cyber Risk Management Project under the Insurance Risk and Finance Research Center, where he led a team conducting data science research applied to cyber risk management. Dr. Goh has prior lecturing experience at Nanyang Technological University and Singapore University of Technology & Design. Dr. Goh is well-connected with the industry and volunteers actively with various non-profit organizations and societies. In 2022, Dr. Goh joined the School of Economics at Singapore Management University as a faculty member. Dr. Goh obtained his Ph.D. and bachelor's degree from Nanyang Technological University in 2019 and 2016, respectively. Dr. Goh is also a Fellow of the Institute of Actuaries (FIA) and a Chartered Enterprise Risk Actuary (CERA).

Linda Sew

Linda brings more than 20 years of experience in the insurance industry, where she has worked with several multi-national insurers and reinsurers. Her corporate experience includes risk management, pricing, underwriting, technical accounting and reserving.

In her consulting work, she specialises in pricing innovative products and deriving tailor-made risk management strategies. Past projects have covered topics such as New Mobility, cyber risk and pandemic business interruption. Linda's clientele includes both multi-national corporations and government institutions. Her engagement with government institutions often includes development and implementation strategies for national insurance schemes, including those covering cyber and natural catastrophe risks.

Linda is a Fellow of the Casualty Actuarial Society (FCAS) and the Singapore Actuarial Society (FSAS), having graduated from the Nanyang Technological University of Singapore with a degree in Actuarial Science. Linda has lived and worked in both Singapore and Germany.

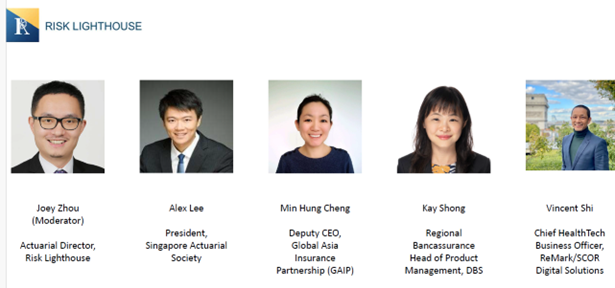

Joey Zhou

Joey is a seasoned professional in the insurance industry, with nearly 20 years of extensive and diverse experience across insurance, reinsurance, and government sectors. Joey's primary areas of passion and expertise are climate risks, mortality and morbidity risks, and inclusive insurance solutions.

Joey is active in research and development in the actuarial community. Since 2014, he has held a council member position in the Singapore Actuarial Society (SAS), where his roles have ranged from leading sponsorship and research committees to organizing workshops and conferences. Additionally, he actively contributes to an executive education program of the Institute and Faculty of Actuaries (IFOA), preparing actuaries and risk managers globally to navigate the complexities of climate risks. He is a member of the Personal Injury (Claims Assessment) Review Committees with the Supreme Court of Singapore and MAS, which successfully developed Singapore's first Actuarial Tables for Personal Injury and Death Claims.

He received his actuarial training at the University of Melbourne and an MBA from the National University of Singapore. He is a Fellow of the Institute of Actuaries (FIA) and a certified Financial Risk Manager (FRM). He also holds the Certificate of Climate Risk and Sustainability awarded by the Institute and Faculty of Actuaries (IFOA) and the Certificate of Sustainability and Climate Risk from the Global Association of Risk Professionals (GARP).